How to make your code 80 times faster

DISCLAIMER: this is not a silver bullet or a general recipe: it worked in this particular case, and it might not work so well in other cases. But I think it is still an interesting technique. Moreover, the various steps and implementations are shown in the same order as I tried them during development, so it is a real-life example of how to proceed when optimizing for PyPy.

Some months ago I played a bit with evolutionary algorithms: the ambitious plan was to automatically evolve a logic which could control a (simulated) quadcopter, i.e. a PID controller (spoiler: it doesn't fly).

The idea is to have an initial population of random creatures: at each generation, the ones with the best fitness survive and reproduce with small, random variations.
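As a rough illustration of that loop, here is a minimal sketch in plain Python. The names `evolve`, `mutate` and `toy_fitness` are hypothetical and are not taken from the evolvingcopter code; a "creature" here is just a list of floats, and the fitness function is a toy one.

```python
import random

def mutate(genome, rng, sigma=0.1):
    """Return a copy of genome with small Gaussian noise added to each gene."""
    return [g + rng.gauss(0.0, sigma) for g in genome]

def evolve(fitness, genome_len=3, population=20, survivors=5,
           generations=30, seed=0):
    """Toy evolutionary loop: keep the fittest, refill with mutated copies."""
    rng = random.Random(seed)
    pop = [[rng.uniform(-1.0, 1.0) for _ in range(genome_len)]
           for _ in range(population)]
    for _ in range(generations):
        # The creatures with the best fitness survive...
        pop.sort(key=fitness, reverse=True)
        best = pop[:survivors]
        # ...and reproduce with small, random variations.
        children = [mutate(rng.choice(best), rng)
                    for _ in range(population - survivors)]
        pop = best + children
    return max(pop, key=fitness)

def toy_fitness(genome):
    # Hypothetical fitness: higher is better, maximal when all genes are zero.
    return -sum(g * g for g in genome)

best = evolve(toy_fitness)
```

Because the survivors are carried over unchanged, the best fitness in the population can never get worse from one generation to the next in this sketch.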

However, for the scope of this post, the actual task at hand is not so important, so let's jump straight to the code. To drive the quadcopter, a Creature has a run_step method which runs at each delta_t (full code):

    class Creature(object):
        INPUTS = 2  # z_setpoint, current z position
        OUTPUTS = 1 # PWM for all 4 motors
        STATE_VARS = 1
        ...

        def run_step(self, inputs):
            # state: [state_vars ... inputs]
            # out_values: [state_vars, ... outputs]
            self.state[self.STATE_VARS:] = inputs
            out_values = np.dot(self.matrix, self.state) + self.constant
            self.state[:self.STATE_VARS] = out_values[:self.STATE_VARS]
            outputs = out_values[self.STATE_VARS:]
            return outputs

- inputs is a numpy array containing the desired setpoint and the current position on the Z axis;

- outputs is a numpy array containing the thrust to give to the motors. To start easy, all the 4 motors are constrained to have the same thrust, so that the quadcopter only travels up and down the Z axis;

- self.state contains arbitrary values of unknown size which are passed from one step to the next;

- self.matrix and self.constant contain the actual logic. By putting the "right" values there, in theory we could get a perfectly tuned PID controller. These are randomly mutated between generations.

At first, I simply tried to run this code on CPython; here is the result:

    $ python -m ev.main
    Generation   1: ... [population = 500]  [12.06 secs]
    Generation   2: ... [population = 500]  [6.13 secs]
    Generation   3: ... [population = 500]  [6.11 secs]
    Generation   4: ... [population = 500]  [6.09 secs]
    Generation   5: ... [population = 500]  [6.18 secs]
    Generation   6: ... [population = 500]  [6.26 secs]

Which means ~6.15 seconds/generation, excluding the first.

Then I tried with PyPy 5.9:

    $ pypy -m ev.main
    Generation   1: ... [population = 500]  [63.90 secs]
    Generation   2: ... [population = 500]  [33.92 secs]
    Generation   3: ... [population = 500]  [34.21 secs]
    Generation   4: ... [population = 500]  [33.75 secs]

Ouch! We are ~5.5x slower than CPython. This was kind of expected: numpy is based on cpyext, which is infamously slow. (Actually, we are working on that and on the cpyext-avoid-roundtrip branch we are already faster than CPython, but this will be the subject of another blog post.)

So, let's try to avoid cpyext. The first obvious step is to use numpypy instead of numpy (actually, there is a hack to use just the micronumpy part). Let's see if the speed improves:

    $ pypy -m ev.main   # using numpypy
    Generation   1: ... [population = 500]  [5.60 secs]
    Generation   2: ... [population = 500]  [2.90 secs]
    Generation   3: ... [population = 500]  [2.78 secs]
    Generation   4: ... [population = 500]  [2.69 secs]
    Generation   5: ... [population = 500]  [2.72 secs]
    Generation   6: ... [population = 500]  [2.73 secs]

So, ~2.7 seconds on average: this is 12x faster than PyPy+numpy, and more than 2x faster than the original CPython. At this point, most people would be happy and go tweeting how PyPy is great.

In general, when talking about CPython vs PyPy, I am rarely satisfied with a 2x speedup: I know that PyPy can do much better than this, especially if you write code which is specifically optimized for the JIT. For a real-life example, have a look at the capnpy benchmarks, in which the PyPy version is ~15x faster than the heavily optimized CPython+Cython version (both have been written by me, and I tried hard to write the fastest code for both implementations).

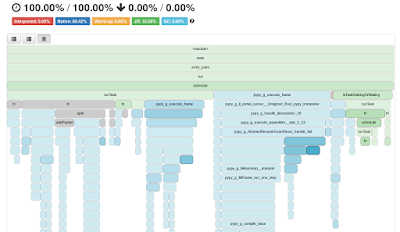

So, let's try to do better. As usual, the first thing to do is to profile and see where we spend most of the time. Here is the vmprof profile. We spend a lot of time inside the internals of numpypy, and allocating tons of temporary arrays to store the results of the various operations.

Also, let's look at the JIT traces and search for the function run: this is the loop in which we spend most of the time, and it is composed of 1796 operations. The operations emitted for the line np.dot(...) + self.constant are listed between lines 1217 and 1456. Here is the excerpt which calls np.dot(...); most of the ops are cheap, but at line 1232 we see a call to the RPython function descr_dot; by looking at the implementation we see that it creates a new W_NDimArray to store the result, which means it has to do a malloc():

The implementation of the + self.constant part is also interesting: contrary to the former, the call to W_NDimArray.descr_add has been inlined by the JIT, so we have a better picture of what's happening; in particular, we can see the call to __0_alloc_with_del____ which allocates the W_NDimArray for the result, and the raw_malloc which allocates the actual array. Then we have a long list of 149 simple operations which set the fields of the resulting array, construct an iterator, and finally do a call_assembler: this is the actual logic to do the addition, which was JITted independently; call_assembler is one of the operations used for JIT-to-JIT calls:

All of this is very suboptimal: in this particular case, we know that the shape of self.matrix is always (2, 3) and that self.state always has 3 elements: so, we are doing an incredible amount of work, including calling malloc() twice for the temporary arrays, just to call two functions which ultimately do a total of 6 multiplications and 6 additions. Note also that this is not a fault of the JIT: CPython+numpy has to do the same amount of work, just hidden inside C calls.

One possible solution to this nonsense is a well known compiler optimization: loop unrolling. From the compiler's point of view, unrolling the loop is always risky because if the matrix is too big you might end up emitting a huge blob of code, possibly useless if the shape of the matrices changes frequently: this is the main reason why the PyPy JIT does not even try to do it in this case.

However, we know that the matrix is small, and always of the same shape. So, let's unroll the loop manually:

    class SpecializedCreature(Creature):

        def __init__(self, *args, **kwargs):
            Creature.__init__(self, *args, **kwargs)
            # store the data in a plain Python list
            self.data = list(self.matrix.ravel()) + list(self.constant)
            self.data_state = [0.0]
            assert self.matrix.shape == (2, 3)
            assert len(self.data) == 8

        def run_step(self, inputs):
            # state: [state_vars ... inputs]
            # out_values: [state_vars, ... outputs]
            k0, k1, k2, q0, q1, q2, c0, c1 = self.data
            s0 = self.data_state[0]
            z_sp, z = inputs
            #
            # compute the output
            out0 = s0*k0 + z_sp*k1 + z*k2 + c0
            out1 = s0*q0 + z_sp*q1 + z*q2 + c1
            #
            self.data_state[0] = out0
            outputs = [out1]
            return outputs

In the actual code there is also a sanity check which asserts that the computed output is the very same as the one returned by Creature.run_step.
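To make the equivalence concrete, here is a small self-contained sketch of such a sanity check. It is not the check from the real code: `generic_step` and `unrolled_step` are hypothetical stand-ins written in plain Python (no numpy), and the matrix values are made up for the example.

```python
def generic_step(matrix, constant, state, inputs):
    """Generic version: out = matrix . [state, inputs] + constant, with loops."""
    vec = list(state) + list(inputs)
    out = [sum(m * v for m, v in zip(row, vec)) + c
           for row, c in zip(matrix, constant)]
    n = len(state)
    return out[:n], out[n:]          # (new state, outputs)

def unrolled_step(data, state, inputs):
    """Hand-unrolled version for a fixed 2x3 matrix and one state variable."""
    k0, k1, k2, q0, q1, q2, c0, c1 = data
    s0 = state[0]
    z_sp, z = inputs
    out0 = s0*k0 + z_sp*k1 + z*k2 + c0
    out1 = s0*q0 + z_sp*q1 + z*q2 + c1
    return [out0], [out1]

# Made-up coefficients, only for illustration.
matrix = [[0.5, -1.0, 2.0],
          [1.5, 0.25, -0.75]]
constant = [0.1, -0.2]
data = matrix[0] + matrix[1] + constant   # same flat layout as self.data
state, inputs = [0.3], [1.0, 0.4]

expected = generic_step(matrix, constant, state, inputs)
actual = unrolled_step(data, state, inputs)
```

Both functions perform the same 6 multiplications and 6 additions; the unrolled one simply spells them out, so there is no loop, no temporary vector and no per-call shape handling left for the JIT to see.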

So, let's try to see how it performs. First, with CPython:

    $ python -m ev.main
    Generation   1: ... [population = 500]  [7.61 secs]
    Generation   2: ... [population = 500]  [3.96 secs]
    Generation   3: ... [population = 500]  [3.79 secs]
    Generation   4: ... [population = 500]  [3.74 secs]
    Generation   5: ... [population = 500]  [3.84 secs]
    Generation   6: ... [population = 500]  [3.69 secs]

This looks good: 60% faster than the original CPython+numpy implementation. Let's try on PyPy:

    Generation   1: ... [population = 500]  [0.39 secs]
    Generation   2: ... [population = 500]  [0.10 secs]
    Generation   3: ... [population = 500]  [0.11 secs]
    Generation   4: ... [population = 500]  [0.09 secs]
    Generation   5: ... [population = 500]  [0.08 secs]
    Generation   6: ... [population = 500]  [0.12 secs]
    Generation   7: ... [population = 500]  [0.09 secs]
    Generation   8: ... [population = 500]  [0.08 secs]
    Generation   9: ... [population = 500]  [0.08 secs]
    Generation  10: ... [population = 500]  [0.08 secs]
    Generation  11: ... [population = 500]  [0.08 secs]
    Generation  12: ... [population = 500]  [0.07 secs]
    Generation  13: ... [population = 500]  [0.07 secs]
    Generation  14: ... [population = 500]  [0.08 secs]
    Generation  15: ... [population = 500]  [0.07 secs]

Yes, it's not an error. After a couple of generations, it stabilizes at around ~0.07-0.08 seconds per generation. This is around 80 (eighty) times faster than the original CPython+numpy implementation, and around 35-40x faster than the naive PyPy+numpypy one.
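For reference, the ratios quoted in this post can be recomputed from the approximate steady-state timings. The figures below are read off the runs shown earlier; 33.96 and 3.80 are averages of the listed generations (excluding the first), and 0.075 is an assumed midpoint of the 0.07-0.08 range.

```python
# Approximate steady-state seconds per generation, read off the runs above.
timings = {
    "CPython + numpy": 6.15,
    "PyPy + numpy (cpyext)": 33.96,
    "PyPy + numpypy": 2.7,
    "CPython, unrolled": 3.80,
    "PyPy, unrolled": 0.075,   # assumed midpoint of 0.07-0.08
}

baseline = timings["CPython + numpy"]
speedups = {name: baseline / secs for name, secs in timings.items()}

for name, factor in sorted(speedups.items(), key=lambda kv: kv[1]):
    print("%-24s %6.2fx vs CPython + numpy" % (name, factor))
```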

Let's look at the trace again: it no longer contains expensive calls, and certainly no more temporary malloc()s. The core of the logic is between lines 386-416, where we can see that it does fast C-level multiplications and additions: float_mul and float_add are translated straight into mulsd and addsd x86 instructions.

As I said before, this is a very particular example, and the techniques described here do not always apply: it is not realistic to expect an 80x speedup on arbitrary code, unfortunately. However, it clearly shows the potential of PyPy when it comes to high-speed computing. And most importantly, it's not a toy benchmark which was designed specifically to have good performance on PyPy: it's a real world example, albeit small.

You might be also interested in the talk I gave at last EuroPython, in which I talk about a similar topic: "The Joy of PyPy JIT: abstractions for free" (abstract, slides and video).

How to reproduce the results

$ git clone https://github.com/antocuni/evolvingcopter

$ cd evolvingcopter

$ {python,pypy} -m ev.main --no-specialized --no-numpypy

$ {python,pypy} -m ev.main --no-specialized

$ {python,pypy} -m ev.main


(Cape of) Good Hope for PyPy

With the excuse of coming to PyCon ZA, during the last two weeks Armin, Ronan, Antonio and sometimes Maciek had a very nice and productive sprint in Cape Town, as the pictures show :). We would like to say a big thank you to Kiwi.com, which sponsored part of the travel costs via its awesome Sourcelift program to help Open Source projects.

| Armin, Anto and Ronan at Cape Point |

Armin, Ronan and Anto spent most of the time hacking at cpyext, our CPython C-API compatibility layer: during the last years, the focus was on making it work and be compatible with CPython, in order to run existing libraries such as numpy and pandas. However, we never paid too much attention to performance, so the net result is that with the latest released version of PyPy, C extensions generally work but their speed ranges from "slow" to "horribly slow".

For example, these very simple microbenchmarks measure the speed of calling (empty) C functions, i.e. the time you spend to "cross the border" between RPython and C. (Note: this includes the time spent doing the loop in regular Python code.) These are the results on CPython, on PyPy 5.8, and on our newest in-progress version:

    $ python bench.py     # CPython
    noargs      : 0.41 secs
    onearg(None): 0.44 secs
    onearg(i)   : 0.44 secs
    varargs     : 0.58 secs

    $ pypy-5.8 bench.py   # PyPy 5.8
    noargs      : 1.01 secs
    onearg(None): 1.31 secs
    onearg(i)   : 2.57 secs
    varargs     : 2.79 secs

    $ pypy bench.py       # cpyext-refactor-methodobject branch
    noargs      : 0.17 secs
    onearg(None): 0.21 secs
    onearg(i)   : 0.22 secs
    varargs     : 0.47 secs
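The bench.py script itself is not reproduced here. As a rough idea of what such a microbenchmark looks like, the following sketch times a loop that calls a function repeatedly. The `bench` helper and the three stand-in callables are hypothetical: the real benchmark calls empty functions defined in a C extension module, whereas these are plain Python functions, so the numbers it prints are not comparable to the ones above.

```python
import time

def bench(label, func, args=(), n=100000):
    """Time n calls of func(*args); the loop overhead is included on purpose."""
    start = time.time()
    for _ in range(n):
        func(*args)
    elapsed = time.time() - start
    print("%-12s: %.2f secs" % (label, elapsed))
    return elapsed

def noargs():
    # Stand-in for an empty METH_NOARGS C function.
    return None

def onearg(x):
    # Stand-in for an empty METH_O C function.
    return None

def varargs(*args):
    # Stand-in for an empty METH_VARARGS C function.
    return None

results = {
    "noargs": bench("noargs", noargs),
    "onearg(None)": bench("onearg(None)", onearg, (None,)),
    "onearg(i)": bench("onearg(i)", onearg, (42,)),
    "varargs": bench("varargs", varargs, (1, 2, 3)),
}
```

Since the function bodies are empty, whatever time is measured is the cost of the call itself plus the surrounding loop, which is exactly the "border crossing" cost the benchmark is after.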

The branch tries to fix such nonsense: so far, we fixed only some cases, which are enough to speed up the benchmarks shown above. But most importantly, we now have a clear path and an actual plan to improve cpyext more and more. Ideally, we would like to reach a point in which cpyext-intensive programs run at worst at the same speed as CPython.

- teach the JIT how to look (a bit) inside the cpyext module;

- write specialized code for calling METH_NOARGS, METH_O and METH_VARARGS functions; previously, we always used a very general and slow logic;

- implement freelists to allocate the cpyext versions of int and tuple objects, as CPython does;

- the cpyext-avoid-roundtrip branch: crossing the RPython/C border is slowish, but the real problem was (and still is for many cases) we often cross it many times for no good reason. So, depending on the actual API call, you might end up in the C land, which calls back into the RPython land, which goes to C, etc. etc. (ad libitum).

The other big topic of the sprint was Armin and Maciej doing a lot of work on the unicode-utf8 branch: the goal of the branch is to always use UTF-8 as the internal representation of unicode strings. The advantages are various:

- decoding a UTF-8 stream is super fast, as you just need to check that the stream is valid;

- encoding to UTF-8 is almost a no-op;

- UTF-8 is always a more compact representation than the currently used UCS-4. It's also almost always more compact than the CPython 3.5 latin1/UCS2/UCS4 combo;

- smaller representation means everything becomes quite a bit faster due to lower cache pressure.

Before you ask: yes, this branch contains special logic to ensure that random access of single unicode chars is still O(1), as it is on both CPython and the current PyPy.
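One way to get constant-time indexing on top of UTF-8 is to keep a sparse table of byte offsets, one entry every few code points. The sketch below illustrates that general idea only; it is not the implementation used in the unicode-utf8 branch, and the class name `Utf8String` and the `STEP` constant are made up for the example.

```python
class Utf8String(object):
    """Unicode string stored as UTF-8 bytes plus a sparse offset index."""

    STEP = 4  # one index entry every STEP code points (tunable trade-off)

    def __init__(self, text):
        self.data = bytearray(text.encode("utf-8"))
        # Byte offset of every STEP-th code point.
        self.index = []
        count = 0
        for pos in range(len(self.data)):
            if self.data[pos] & 0xC0 != 0x80:  # not a continuation byte
                if count % self.STEP == 0:
                    self.index.append(pos)
                count += 1
        self.length = count

    def __len__(self):
        return self.length

    def __getitem__(self, i):
        if not 0 <= i < self.length:
            raise IndexError(i)
        pos = self.index[i // self.STEP]
        # Skip at most STEP - 1 code points: bounded work, independent of len.
        for _ in range(i % self.STEP):
            pos = self._next(pos)
        return self.data[pos:self._next(pos)].decode("utf-8")

    def _next(self, pos):
        """Return the byte offset of the code point following the one at pos."""
        pos += 1
        while pos < len(self.data) and self.data[pos] & 0xC0 == 0x80:
            pos += 1
        return pos

s = Utf8String(u"na\u00efve caf\u00e9 \u2615 \u65e5\u672c\u8a9e")
```

The index costs one integer per `STEP` code points, so the storage overhead is still proportional to the string length, but with a constant that can be tuned; a real implementation could also skip the index entirely for pure-ASCII strings or build it lazily on first indexing.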

We also plan to improve the speed of decoding even more by using modern processor features, like SSE and AVX. Preliminary results show that decoding can be done 100x faster than the current setup.

In summary, this was a long and profitable sprint, in which we achieved lots of interesting results. However, what we liked even more was the privilege of doing commits from awesome places such as the top of Table Mountain:

Our sprint venue today #pypy pic.twitter.com/o38IfTYmAV (Ronan Lamy, @ronanlamy, 4 October 2017)

| The panorama we looked at instead of staring at cpyext code |

It was awesome meeting you all, and I'm so stoked about the recent PyPy improvements :-D

Fantastic news. Many Python users need to use some of these many specialized CPython-based extension modules for which there is no CFFI alternative extensively and as a result have not benefited much, or not at all, from PyPy's speed advantages. These improvements could make PyPy the default Python for many of us.

Could you give a hint to how you're doing O(1) individual character access in UTF-8 strings? Not that I'd find such a requirement particularly necessary (might be handy for all-ASCII strings, but easy to flat those cases), but how is it done? I can figure O(log(n)) ways with up to O(n) storage overhead or O(sqrt(n)) with up to O(sqrt(n)) storage overhead, but O(1) w/o the O(n) storage overhead of having UTF-32 around?

Hi Anonymous.

It's O(1) time with O(n) storage overhead, but the constants can be manipulated to have 10% or 25% overhead, and only if ever indexed and not ascii at that.

Really excited to hear about the Unicode representation changes; it should finally make PyPy significantly faster at Unicode manipulation than CPython 3.6 is. It seems this has been bogging down PyPy's advantage at Unicode-heavy workloads like webapp template rendering.

Even without O(1) access to characters by index, I think it's a great idea to use UTF-8 internally, since that's the prevalent encoding for input/output pretty much everywhere. Accessing Unicode characters by index is an antipattern in most situations and UCS-2/UTF-16 is becoming irrelevant.

PyPy v5.9 Released, Now Supports Pandas, NumPy

- NumPy and Pandas now work on PyPy2.7 (together with Cython 0.27.1). Many other modules based on C-API extensions work on PyPy as well.

- Cython 0.27.1 (released very recently) supports more projects with PyPy, both on PyPy2.7 and PyPy3.5 beta. Note version 0.27.1 is now the minimum version that supports this version of PyPy, due to some interactions with updated C-API interface code.

- We optimized the JSON parser for recurring string keys, which should decrease memory use by up to 50% and increase parsing speed by up to 15% for large JSON files with many repeating dictionary keys (which is quite common).

- CFFI, which is part of the PyPy release, has been updated to 1.11.1, improving an already great package for interfacing with C. CFFI now supports complex arguments in API mode, as well as char16_t and char32_t, and has improved support for callbacks.

- Issues in the C-API compatibility layer that appeared as excessive memory use were cleared up and other incompatibilities were resolved. The C-API compatibility layer does slow down code which crosses the python-c interface often. Some fixes are in the pipelines for some of the performance issues, and we still recommend using pure python on PyPy or interfacing via CFFI.
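To see why recurring string keys matter for JSON memory use, consider the sketch below. It only illustrates the general idea of sharing one string object per distinct key; it is not how the PyPy parser is implemented (that optimization lives inside the decoder itself), and `make_key_sharing_hook` is a hypothetical helper built on the standard `object_pairs_hook` parameter of `json.loads`.

```python
import json

def make_key_sharing_hook():
    """Build an object_pairs_hook that reuses one string object per distinct key."""
    seen = {}

    def hook(pairs):
        # setdefault returns the first string stored for this key, so every
        # later dict with an equal key ends up pointing at the same object.
        return {seen.setdefault(key, key): value for key, value in pairs}

    return hook

doc = '[{"name": "a", "size": 1}, {"name": "b", "size": 2}]'
records = json.loads(doc, object_pairs_hook=make_key_sharing_hook())
keys_first = sorted(records[0])
keys_second = sorted(records[1])
```

With thousands of records sharing the same handful of keys, storing each key once instead of once per record is where the memory saving comes from.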

Please let us know if your use case is slow, we have ideas how to make things faster but need real-world examples (not micro-benchmarks) of problematic code.

Work sponsored by a Mozilla grant continues on PyPy3.5; we continue on the path to the goal of a complete python 3.5 implementation. Of course the bug fixes and performance enhancements mentioned above are part of both PyPy2.7 and PyPy3.5 beta.

As always, this release fixed many other issues and bugs raised by the growing community of PyPy users. We strongly recommend updating.

You can download the v5.9 releases here (note that we provide PyPy3.5 binaries for only Linux 64bit for now):

We would like to thank our donors and contributors, and encourage new people to join the project. PyPy has many layers and we need help with all of them: PyPy and RPython documentation improvements, tweaking popular modules to run on PyPy, or general help with making RPython’s JIT even better.

What is PyPy?

PyPy is a very compliant Python interpreter, almost a drop-in replacement for CPython 2.7 (stdlib version 2.7.13), and CPython 3.5 (stdlib version 3.5.3). It’s fast (PyPy and CPython 2.7.x performance comparison) due to its integrated tracing JIT compiler.

We also welcome developers of other dynamic languages to see what RPython can do for them.

The PyPy 2.7 release supports:

- x86 machines on most common operating systems (Linux 32/64 bits, Mac OS X 64 bits, Windows 32 bits, OpenBSD, FreeBSD)

- newer ARM hardware (ARMv6 or ARMv7, with VFPv3) running Linux,

- big- and little-endian variants of PPC64 running Linux,

- s390x running Linux

What else is new?

Cheers, The PyPy team

Good job ! 😀🎉

It would be great if you could update https://packages.pypy.org/

I'm going to donate again ! your work is awesome.

PyPy test: pandas runs two or three times slower than on Python

    df = sparkSession.sql("select * from test_users_dt").toPandas()
    for index, row in df.iterrows():
        result = 0
        for key in range(0, 10000000):
            event_type = row.event_type
            if key > 234:
                result = result + 1
            len(event_type + "123")
        print(result)

@melin

We know that. We're in the process of improving that by merging various cpyext improvement branches. Stay tuned.

So this means the numpy port for pypy is redundant now right? We can use the original python numpy package?

Let's remove the Global Interpreter Lock

Hello everyone

The Python community has been discussing removing the Global Interpreter Lock for a long time. There have been various attempts at removing it: Jython or IronPython successfully removed it with the help of the underlying platform, and some have yet to bear fruit, like gilectomy. Since our February sprint in Leysin, we have experimented with the topic of GIL removal in the PyPy project. We believe that the work done in IronPython or Jython can be reproduced with only a bit more effort in PyPy. Compared to that, removing the GIL in CPython is a much harder topic, since it also requires tackling the problem of multi-threaded reference counting. See the section below for further details.

As we announced at EuroPython, what we have so far is a GIL-less PyPy which can run very simple multi-threaded, nicely parallelized programs. At the moment, more complicated programs probably segfault. The remaining 90% (and another 90%) of the work lies in putting locks in strategic places so PyPy does not segfault during concurrent accesses to data structures.

Since such work would complicate the PyPy code base and our day-to-day work, we would like to judge the interest of the community and the commercial partners to make it happen (we are not looking for individual donations at this point). We estimate a total cost of $50k, out of which we already have backing for about 1/3 (with a possible 1/3 extra from the STM money, see below). This would give us a good shot at delivering a good proof-of-concept working PyPy with no GIL. If we can get a $100k contract, we will deliver a fully working PyPy interpreter with no GIL as a release, possibly separate from the default PyPy release.

People asked several questions, so I'll try to answer the technical parts here.

What would the plan entail?

We've already done the work on the Garbage Collector to allow doing multi-threaded programs in RPython. "All" that is left is adding locks on mutable data structures everywhere in the PyPy codebase. Since it would significantly complicate our workflow, we require real interest in that topic, backed up by commercial contracts in order to justify the added maintenance burden.

Why did the STM effort not work out?

STM was a research project that proved that the idea is possible. However, the amount of user effort that is required to make programs run in a parallelizable way is significant, and we never managed to develop tools that would help in doing so. At the moment we're not sure if more work spent on tooling would improve the situation or if the whole idea is really doomed. The approach also ended up adding significant overhead on single threaded programs, so in the end it is very easy to make your programs slower. (We have some money left in the donation pot for STM which we are not using; according to the rules, we could declare the STM attempt failed and channel that money towards the present GIL removal proposal.)

Wouldn't subinterpreters be a better idea?

Python is a very mutable language: there is a ton of mutable state and basic objects (classes, functions, ...) that are compile-time in other languages but runtime and fully mutable in Python. In the end, sharing things between subinterpreters would be restricted to basic immutable data structures, which defeats the point. Subinterpreters suffer from the same problems as multiprocessing with no additional benefits. We believe that reducing mutability to implement subinterpreters is not viable without seriously impacting the semantics of the language (a conclusion which applies to many other approaches too).

Why is it easier to do in PyPy than CPython?

Removing the GIL in CPython has two problems:

- how do we guard access to mutable data structures with locks and

- what to do with reference counting that needs to be guarded.

PyPy only has the former problem; the latter doesn't exist, due to a different garbage collector approach. Of course the first problem is a mess too, but at least we are already half-way there. Compared to Jython or IronPython, PyPy lacks some data structures that are provided by JVM or .NET, which we would need to implement, hence the problem is a little harder than on an existing multithreaded platform. However, there is good research and we know how that problem can be solved.
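As an application-level illustration of the first problem, the sketch below guards a read-modify-write on a shared dictionary with a lock. It is only an analogy: the work described here would place such locks inside the interpreter's own (RPython) implementation of dicts, lists and so on, not in user code, and the `LockedCounter` class is made up for the example.

```python
import threading

class LockedCounter(object):
    """A mutable structure whose read-modify-write is guarded by a lock."""

    def __init__(self):
        self._lock = threading.Lock()
        self._counts = {}

    def increment(self, key):
        # Without the lock, two threads could read the same old value
        # and one of the two updates would be lost.
        with self._lock:
            self._counts[key] = self._counts.get(key, 0) + 1

    def get(self, key):
        with self._lock:
            return self._counts.get(key, 0)

def hammer(counter, key, n):
    for _ in range(n):
        counter.increment(key)

counter = LockedCounter()
threads = [threading.Thread(target=hammer, args=(counter, "hits", 10000))
           for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()
total = counter.get("hits")
```

The second problem has no equivalent in this sketch: on CPython every object access also touches a reference count, which would need the same kind of protection, whereas PyPy's garbage collector does not use reference counting.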

Best regards,

Maciej Fijalkowski

Where can we donate or forward a link to managing directors for corporate donations?

Neither .Net, nor Java put locks around every mutable access. Why the hell PyPy should?

It sounds to me like you are just looking for money to spend. I see no reliable or commercial deliverable coming out of this effort (you listed a bucketload of caveats already). If it were doable in $100k, it would have been done long ago, no? Caveat Emptor to those who toss their money at this.

200+ comments about this article are at: https://news.ycombinator.com/item?id=15008636

@funny_falcon: I don't read this as them arguing for "putting locks around *every* mutable access". Rather, just the core shared-mutable pieces of the run-time library and infrastructure, which in .NET and the JVM are provided by the VM itself for Jython and IronPython but which PyPy has to implement.

@scott_taggart: Your vision seems limited. Perhaps you aren't familiar with the PyPy team's strong history of delivering. It may well be 'doable in $100K' but how is that supposed to have spontaneously happened already without a viable plan and a trusted team which is exactly what the PyPy project is?

I always thought the STM concept was really clever and elegant in theory but that the overhead involved, both in recording and rollback-retries, could impact forward progress too much to be viable in practice. Essentially, STM and locks are duals of each other, with STM having better composition and locks less overhead.

At least with a more traditional locking approach, the locks are still being inserted by the interpreter/library, so they can be reasoned about more carefully (and even instrumented programmatically) to avoid some of the classic problems with lock-based designs whilst regaining the performance lost to STM overhead.

If anyone can pull it off, the PyPy team can :-)

I have been very impressed with the PyPy developers accomplishments to date and sincerely hope that they find corporate sponsors for this worthwhile endeavor.

How can people donate? $50k seems a bargain for such an important achievement. That's pocket change to most moderately sized companies.

Binary wheels for PyPy

Hi,

this is a short blog post, just to announce the existence of this Github repository, which contains binary PyPy wheels for some selected packages. The availability of binary wheels means that you can install the packages much more quickly, without having to wait for compilation.

- numpy

- scipy

- pandas

- psutil

- netifaces

For now, we provide only wheels built on Ubuntu, compiled for PyPy 5.8.

In particular, it is worth noting that they are not manylinux1 wheels, which means they might not work on other Linux distributions. For more information, see the explanation in the README of the above repo.

Moreover, the existence of the wheels does not guarantee that they work correctly 100% of the time. They still depend on cpyext, our C-API emulation layer, which is still a work in progress, although it has become better and better during the last months. Again, the wheels are there only to save compilation time.

To install a package from the wheel repository, you can invoke pip like this:

$ pip install --extra-index-url https://antocuni.github.io/pypy-wheels/ubuntu numpy

Very nice. The main reason I can't actively recommend PyPy to others is that I would have to help them install all packages, where for CPython I can just say "conda install foo". Working on efforts like this is extremely useful for the community.

Speaking of which if those were conda packages, that would make it much easier for me. And if pytables and pyyaml worked in pypy (a few years ago they did not and I have no idea what is their current state) and were packaged too, I could finally try pypy on my real projects, and not just toy examples.

PyPy v5.8 released

This new PyPy2.7 release includes the upstream stdlib version 2.7.13, and PyPy3.5 includes the upstream stdlib version 3.5.3.

We fixed critical bugs in the shadowstack rootfinder garbage collector strategy that crashed multithreaded programs and very rarely showed up even in single threaded programs.

We added native PyPy support to profile frames in the vmprof statistical profiler.

The struct module functions pack* and unpack* are now much faster, especially on raw buffers and bytearrays. Microbenchmarks show a 2x to 10x speedup. Thanks to Gambit Research for sponsoring this work.

This release adds (but disables by default) link-time optimization and profile guided optimization of the base interpreter, which may make unjitted code run faster. To use these, translate with appropriate options. Be aware of issues with gcc toolchains, though.
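For readers unfamiliar with the struct functions that operate on buffers, here is a small example of the kind of code that benefits from the pack*/unpack* speedup. It uses only the standard `struct` API; the record layout is invented for the illustration.

```python
import struct

# Hypothetical record layout: little-endian uint16 id, uint32 timestamp,
# float64 value. "<" disables padding, so the size is 2 + 4 + 8 bytes.
RECORD = struct.Struct("<HId")

rows = [(1, 1000, 0.5), (2, 2000, 1.25), (3, 3000, -4.0)]
buf = bytearray(RECORD.size * len(rows))   # preallocated raw buffer

for i, row in enumerate(rows):
    # pack_into writes in place, without creating an intermediate bytes object.
    RECORD.pack_into(buf, i * RECORD.size, *row)

# unpack_from reads straight out of the bytearray at a given offset.
decoded = [RECORD.unpack_from(buf, i * RECORD.size) for i in range(len(rows))]
```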

Please let us know if your use case is slow, we have ideas how to make things faster but need real-world examples (not micro-benchmarks) of problematic code.

Work sponsored by a Mozilla grant continues on PyPy3.5; numerous fixes from CPython were ported to PyPy and PEP 489 was fully implemented. Of course the bug fixes and performance enhancements mentioned above are part of both PyPy 2.7 and PyPy 3.5.

CFFI, which is part of the PyPy release, has been updated to an unreleased 1.10.1, improving an already great package for interfacing with C.

Anyone using NumPy 1.13.0 must upgrade PyPy to this release, since we implemented some previously missing C-API functionality. Many other C extension modules now work with PyPy; let us know if yours does not.

As always, this release fixed many issues and bugs raised by the growing community of PyPy users. We strongly recommend updating.

You can download the v5.8 release here:

We would like to thank our donors and contributors, and encourage new people to join the project. PyPy has many layers and we need help with all of them: PyPy and RPython documentation improvements, tweaking popular modules to run on PyPy, or general help with making RPython’s JIT even better.

What is PyPy?

PyPy is a very compliant Python interpreter, almost a drop-in replacement for CPython 2.7 and CPython 3.5. It’s fast (PyPy and CPython 2.7.x performance comparison) due to its integrated tracing JIT compiler.

We also welcome developers of other dynamic languages to see what RPython can do for them.

The PyPy 2.7 release supports:

- x86 machines on most common operating systems (Linux 32/64 bits, Mac OS X 64 bits, Windows 32 bits, OpenBSD, FreeBSD)

- newer ARM hardware (ARMv6 or ARMv7, with VFPv3) running Linux,

- big- and little-endian variants of PPC64 running Linux,

- s390x running Linux

What else is new?

Cheers, The PyPy team

Can we get a comprehensive update on Numpypy? It has gone really quiet since the days when Alex Gaynor used to talk at Pycon etc about the work which has been going on since what 2010/11? The repo has issues that are not looked at. I would really like an honest appraisal of what was learned in the Numpypy project and what is the future of Numpy (Scipy too) & PyPy because the situation for developers like myself is that we're caught between a rock and a hard place. PyPy consistently allows us to write code and explore algorithms in Python!! Whereas CPython forces you into C/Cython continually. PyPy is a great dream in my heart. What you guys are doing - allowing me to write Python and it be fast. What other language forces you so much to write in another language when performance is a consideration? The speed difference between Node.js and Python 3 is laughable. PyPy for the win!!!!

But....and it's a big but I am one of those devs who extensively is addicted to numeric arrays, not because I'm a 'quant' or an astronomer or rocket scientist but because Numpy arrays are simply better for many solutions than Python's other data structures. And once leveraged, giving that up to go to PyPy is impossible. It forces you to choose between numpy + slower python (CPython) or slower Numpy and faster python (PyPy).

Numpypy was a great dream, the best of both. But it seems to have failed, proven to be too difficult or does it simply need more money? I would appreciate a public update (if one exists, please link to it). Because the sadness for me is that a genuinely fast Python runtime will never be usable until the Numpy/Scipy world works and you get the fast python and as fast numpy.

I would really like to help, raise money whatever but maybe I'm out of the loop and the plan has changed?

Hi Albert. We have decided that a better route is to use upstream NumPy for compatibility. We are a small group, and reimplementing all of the C code in NumPy for Numpypy would be a never-ending, close to impossible task.

However, we do have a different long-term plan to combine NumPy and Python. Since our C-API emulation layer is slow, perhaps we can "hijack" the most common Python calls that cross that emulation border and make them fast. This would utilize much of NumPyPy but would mean that only a subset of the extensive NumPy library would need to be implemented and maintained. We have a branch that demonstrates a proof-of-concept for simple item access (ctypedef struct). Help on the PyPy project is always welcome, come to #pypy on IRC and we can discuss it further.

PyPy 5.7.1 bugfix released

Thanks to those who reported issues and helped test out the fixes

You can download the v5.7.1 release here:

What is PyPy?

PyPy is a very compliant Python interpreter, almost a drop-in replacement for CPython 2.7 and CPython 3.5. It’s fast (PyPy and CPython 2.7.x performance comparison) due to its integrated tracing JIT compiler. We also welcome developers of other dynamic languages to see what RPython can do for them.

The PyPy 2.7 release supports:

- x86 machines on most common operating systems (Linux 32/64 bits, Mac OS X 64 bits, Windows 32 bits, OpenBSD, FreeBSD)

- newer ARM hardware (ARMv6 or ARMv7, with VFPv3) running Linux,

- big- and little-endian variants of PPC64 running Linux,

- s390x running Linux

Please update, and continue to help us make PyPy better.

Cheers, The PyPy team

any chance for a Mac OS X PyPy3 distribution?

compilation from sources fails …

thanks for the great work by the way !

Tried looking for a pypy wishlist but couldn't find one. So hopefully somebody reads comments.

My three biggest pypy wishes are for...

1. Faster csv file reading by replacing Python library code with compiled C code. As I understood it 4 years ago, this is still slower than CPython, so it is still on the todo list.

2. Update SQLite to latest version in pypy distribution since they have made some great speed enhancements in recent releases.

3. Create a containerized, downloadable Docker distribution for PyPy which allows for easy deployment of PyPy projects to other machines, platforms and thumb drives. This would also allow easier setup of multiple PyPy microservices and encapsulation of multiple PyPy environments on the same machine.

@aiguy: csv is written in C already nowadays. Please report an issue with reproducible examples if you find that PyPy is still a lot slower than CPython at reading large-ish csv files.

For SQLite, I guess you're talking about Windows. We have plans to update it at some point.

For Docker, that's outside the scope of the PyPy team and should be done (or is done already?) by other people.

Native profiling in VMProf

We are happy to announce a new release for the PyPI package vmprof.

It is now able to capture native stack frames on Linux and Mac OS X to show you bottlenecks in compiled code (such as CFFI modules, Cython or CPython C extensions). It supports PyPy and CPython versions 2.7, 3.4, 3.5 and 3.6. Special thanks to Jetbrains for funding the native profiling support.

What is vmprof?

If you have already worked with vmprof you can skip the next two sections. If not, here is a short introduction:

The goal of the vmprof package is to give you more insight into your program. It is a statistical profiler. Another prominent profiler you might already have worked with is cProfile, which is bundled with the Python standard library.

vmprof's distinct feature (compared to most other profilers) is that it does not significantly slow down your program execution. The employed strategy is statistical, rather than deterministic. Not every function call is intercepted; instead, it samples stack traces and memory usage at a configured sample rate (usually around 100 Hz). You can imagine that this creates a lot less contention than doing work before and after each function call.

As mentioned earlier, cProfile gives you a complete profile, but it needs to intercept every function call (it is a deterministic profiler). This means that every function call has to be captured and recorded, which takes a significant amount of time.

The overhead vmprof consumes is roughly 3-4% of your total program runtime or even less if you reduce the sampling frequency. Indeed it lets you sample and inspect much larger programs. If you failed to profile a large application with cProfile, please give vmprof a shot.
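To make the difference between statistical and deterministic profiling more concrete, here is a toy sampling profiler written with the standard library only. It is a sketch of the general idea, not how vmprof is implemented: the helper names (`sample_stacks`, `profile`, `busy_work`) are made up for this example, and it relies on `sys._current_frames()`, which is an interpreter implementation detail.

```python
import collections
import sys
import threading
import time


def sample_stacks(thread_id, counts, stop_event, interval):
    """Every `interval` seconds, record which function the target thread is in."""
    while not stop_event.is_set():
        frame = sys._current_frames().get(thread_id)
        if frame is not None:
            counts[frame.f_code.co_name] += 1
        time.sleep(interval)


def busy_work(duration):
    """Burn CPU for roughly `duration` seconds."""
    deadline = time.time() + duration
    total = 0
    while time.time() < deadline:
        total += 1
    return total


def profile(func, *args, interval=0.01):
    """Run func(*args) while a background thread samples the calling thread."""
    counts = collections.Counter()
    stop_event = threading.Event()
    sampler = threading.Thread(
        target=sample_stacks,
        args=(threading.get_ident(), counts, stop_event, interval),
    )
    sampler.start()
    try:
        func(*args)
    finally:
        stop_event.set()
        sampler.join()
    return counts


counts = profile(busy_work, 0.3)
for name, hits in counts.most_common():
    print(name, hits)
```

The profiled function is never instrumented: the cost is one stack lookup per sample, independent of how many function calls the program makes. Lowering the sampling frequency (a larger `interval`) reduces the overhead further, at the price of a coarser profile.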

vmprof.com or PyCharm

- A web service on vmprof.com

- PyCharm Professional Edition

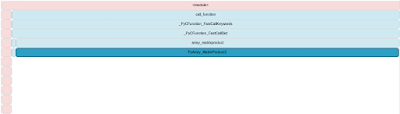

Flamegraph: Accumulates and displays the most frequent codepaths. It allows you to quickly and accurately identify hot spots in your code. The flame graph below is a very short run of richards.py (Thus it shows a lot of time spent in PyPy's JIT compiler).

List all functions (optionally sorted): the equivalent of the vmprof command line output in the web.

Memory curve: A line plot that shows how many MBytes have been consumed over the lifetime of your program (see more info in the section below).

Native programs

The new feature introduced in vmprof 0.4.x allows you to look beyond the Python level. As you might know, Python maintains a stack of frames to keep track of execution. Until now, vmprof profiles only contained that level of information. But what if your program jumps to native code (such as calling gzip compression on a large file)? Previously you would not see that information.

Many packages make use of the CPython C API (which we discourage; please look up cffi for a better way to call C). Have you ever known that your performance problems reach down into native code, but could not profile it properly? Now you can!

Let's inspect a very simple Python program to find out why a program is significantly slower on Linux than on Mac:

import numpy as np

n = 1000

a = np.random.random((n, n))

b = np.random.random((n, n))

c = np.dot(np.abs(a), b)

The program takes two n×n random matrices and computes their dot product. The first argument to the dot product is the element-wise absolute value of the first random matrix.

| Run | Python | NumPy | OS | Matrix size | Took |
| --- | --- | --- | --- | --- | --- |
| [1] | CPython 3.5.2 | NumPy 1.12.1 | Mac OS X, 10.12.3 | n=5000 | ~9 sec |
| [2] | CPython 3.6.0 | NumPy 1.12.1 | Linux 64, Kernel 4.9.14 | n=1000 | ~26 sec |

Note that the Linux machine operates on a 5 times smaller matrix, yet it takes much longer. What is wrong? Is Linux slow? CPython 3.6.0? Well no, let's inspect [1] and [2] (shown below in that order).

[2], which runs on Linux, spends nearly all of its time in PyArray_MatrixProduct2. If you compare it to [1] on Mac OS X, you'll see that there a lot of time is spent generating the random numbers and the rest in cblas_matrixproduct.

BLAS has a very efficient implementation, so you can achieve the same on Linux if you install a BLAS implementation (such as OpenBLAS).
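One way to check which situation you are in is to ask NumPy how it was built and to time the same kind of dot product. The snippet below is a small sketch: `time_dot` is a name invented for this example and the matrix size is kept small on purpose; `np.show_config()` prints the build configuration, including any BLAS/LAPACK libraries NumPy was linked against (the exact output format depends on the NumPy version).

```python
import time

import numpy as np


def time_dot(n):
    """Time the dot product from the example above for n x n matrices."""
    a = np.random.random((n, n))
    b = np.random.random((n, n))
    start = time.perf_counter()
    c = np.dot(np.abs(a), b)
    return c, time.perf_counter() - start


# Print the build information, including the BLAS libraries (if any).
np.show_config()

c, elapsed = time_dot(200)
print("n=200 dot product took %.4f s" % elapsed)
```

If no optimized BLAS shows up in the configuration, a slow matrix product like the one in run [2] is the likely consequence.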

Usually you can spot potential program source locations that take a lot of time and might be the first starting point to resolve performance issues.

Beyond Python programs

It is not unthinkable that the strategy can be reused for native programs. Indeed this can already be done by creating a small cffi wrapper around an entry point of a compiled C program. It would even work for programs compiled from other languages (e.g. C++ or Fortran). The resulting function names are the full symbol names, either embedded in the executable's symbol table or extracted from the DWARF debugging information. Most of those will be compiler specific and contain some cryptic information.

Memory profiling

We thankfully received a code contribution from the company Blue Yonder. They have built a memory profiler (for Linux and Mac OS X) on top of vmprof.com that displays the memory consumption for the runtime of your process.

You can run it the following way:

$ python -m vmprof --mem --web script.py

By adding --mem, vmprof will capture memory information and display it in the dedicated view on vmprof.com. You can open that view by clicking the 'Memory' switch in the flamegraph view.

There is more

Some more minor highlights contained in 0.4.x:

- VMProf support for Windows 64 bit (No native profiling)

- VMProf can read profiles generated by another host system

- VMProf is now bundled in several binary wheels for fast and easy installation (Mac OS X, Linux 32/64 for CPython 2.7, 3.4, 3.5, 3.6)

vmprof has not reached the end of development. There are many features we could implement. But there is one feature that could be a great asset to many Python developers.

Continuous delivery of your statistical profile, or in short, profile streaming. One of the great strengths of vmprof is that it adds very little overhead. It is not a crazy idea to run this in production.

It would require a smart way to stream the profile in the background to vmprof.com and new visualizations to look at the much larger amount of data your Python service produces.

If that sounds like a solid vmprof improvement, don't hesitate to get in touch with us (e.g. IRC #pypy, mailing list pypy-dev, or comment below)

You can help!

There are some immediate things other people could help with. Either by donating time or money (yes we have occasional contributors which is great)!

- We gladly received a code contribution for the memory profiler, but there was not enough time to finish the migration completely. Sadly it is a bit brittle right now.

- We would like to spend more time on other visualizations. This should include a much better user experience on vmprof.com (like a tutorial that explains the visualizations that we already have).

- Build Windows 32/64 bit wheels (for all CPython versions we currently support)

Richard Plangger (plan_rich) and the PyPy Team

[1] Mac OS X https://vmprof.com/#/567aa150-5927-4867-b22d-dbb67ac824ac

[2] Linux64 https://vmprof.com/#/097fded2-b350-4d68-ae93-7956cd10150c

PyPy2.7 and PyPy3.5 v5.7 - two in one release

This new PyPy2.7 release includes the upstream stdlib version 2.7.13, and PyPy3.5 (our first in the 3.5 series) includes the upstream stdlib version 3.5.3.

We continue to make incremental improvements to our C-API compatibility layer (cpyext). PyPy2 can now import and run many C-extension packages, among the most notable are Numpy, Cython, and Pandas. Performance may be slower than CPython, especially for frequently-called short C functions. Please let us know if your use case is slow, we have ideas how to make things faster but need real-world examples (not micro-benchmarks) of problematic code.

Work proceeds at a good pace on the PyPy3.5 version due to a grant from the Mozilla Foundation, hence our first 3.5.3 beta release. Thanks Mozilla !!! While we do not pass all tests yet, asyncio works and as these benchmarks show it already gives a nice speed bump. We also backported the f"" formatting from 3.6 (as an exception; otherwise “PyPy3.5” supports the Python 3.5 language).

CFFI has been updated to 1.10, improving an already great package for interfacing with C.

We now use shadowstack as our default gcrootfinder even on Linux. The alternative, asmgcc, will be deprecated at some future point. While about 3% slower, shadowstack is much more easily maintained and debuggable. Also, the performance of shadowstack has been improved in general: this should close the speed gap between other platforms and Linux.

As always, this release fixed many issues and bugs raised by the growing community of PyPy users. We strongly recommend updating.

You can download the v5.7 release here:

We would like to thank our donors for the continued support of the PyPy project.

We would also like to thank our contributors and encourage new people to join the project. PyPy has many layers and we need help with all of them: PyPy and RPython documentation improvements, tweaking popular modules to run on pypy, or general help with making RPython’s JIT even better.

What is PyPy?

PyPy is a very compliant Python interpreter, almost a drop-in replacement for CPython 2.7 and CPython 3.5. It’s fast (PyPy and CPython 2.7.x performance comparison) due to its integrated tracing JIT compiler. We also welcome developers of other dynamic languages to see what RPython can do for them.

The PyPy 2.7 release supports:

- x86 machines on most common operating systems (Linux 32/64 bits, Mac OS X 64 bits, Windows 32 bits, OpenBSD, FreeBSD)

- newer ARM hardware (ARMv6 or ARMv7, with VFPv3) running Linux,

- big- and little-endian variants of PPC64 running Linux,

- s390x running Linux

What else is new?

Cheers, The PyPy team

> We also backported the f"" formatting from 3.6 (as an exception; otherwise “PyPy3.5” supports the Python 3.5 language).

Could you also support just the syntax part of variable type declarations? It'll make using mypy that much nicer.

Hello.

Thanks for pypy!

I have a question: Is there any big company who using pypy in production?

Thanks

Great work as usual! Is there any plan to benefit from programs using PEP 484 syntax ?

@Canesin: benefit for performance? No. The PEP itself says "Using type hints for performance optimizations is left as an exercise for the reader". But that's a misleading comment. There is no useful optimization that we can apply from the knowledge "argument 1 is an int", because that could also be an arbitrarily-large integer and/or an instance of a subclass of int. And if it really turns out to be almost always a regular machine-sized integer, then PyPy's JIT will figure it out by itself. PEP 484 is totally pointless for performance. (It is probably useful for other reasons outside the scope of this comment.)
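A small illustration of the point above, that the hint "argument 1 is an int" does not pin down a machine-sized integer. The names `add_one` and `Verbose` are made up for this sketch:

```python
class Verbose(int):
    """A subclass of int that changes what '+' means."""

    def __add__(self, other):
        return "surprise"


def add_one(x: int) -> int:
    # The annotation is not enforced at runtime and does not say
    # which kind of int arrives here.
    return x + 1


small = add_one(41)        # a regular machine-sized integer
huge = add_one(2 ** 100)   # an arbitrarily large integer
odd = add_one(Verbose(1))  # an instance of a subclass of int

print(small, huge, odd)
```

All three calls satisfy the annotation, yet only the first one is the simple case that a compiler could turn into a single machine addition.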

Excellent news! Is PyPy3 support for 32bit Linux planned? Thanks for info.

Miro: yes, we plan to have support for the same set of platforms. The various Posix platforms are not too much work, and Windows will follow, too.

Is there anybody working on win64? It is a bit frustrating to see pypy maturing quickly to the point that I could probably use it soon in production... if only it worked on win64.

Gaëtan: no. We need either outside contributions or, more likely, money to make it happen. Just like what occurred with Mozilla for Python 3.

Leysin Winter Sprint Summary

Why don't you join us next time?

1. Planning Session: Tasks from previous days that have seen progress or are completed are noted in a shared document. Everyone adds new tasks and then assigns themselves to one or more tasks (usually in pairs). As soon as everybody is happy with their task and has a partner to work with, the planning session is concluded and the work can start.

2. Discussions: A sprint is a good occasion to discuss difficult and important topics in person. We usually sit down in a separate area in the sprint room and discuss until a) nobody wants to discuss anymore or b) we found a solution to the problem. The good thing is that usually the outcome is b).

3. Lunch: For lunch we prepare sandwiches and other finger food.

4. Continue working until dinner, which we eat at a random restaurant in Leysin.

5. Goto 1 the next day, if the sprint has not ended.

Sprint Summary

Sprint goals included working on the following topics:

- Work towards releasing PyPy 3.5 (it will be released soon)

- CPython Extension (CPyExt) modules on PyPy

- Have fun in winter sports (a side goal)

Highlights

- We have spent lots of time debugging and fixing memory issues on CPyExt.

- Fruitful discussions and progress on how to flesh out some details of the unicode representation in PyPy. Our current goal is to use utf-8 as the internal unicode representation and have fast vectorized operations (indexing, check if valid, ...).

- PyPy will participate in GSoC 2017 and we will try to allocate more resources to that than last year.

- Profile and think about some details of how to reduce the starting size of the interpreter. The starting point would be to look at the parser and reduce the number of strings kept alive.

- Found a topic for a student's master's thesis: correctly freeing cpyext reference cycles.

- Run lots of Python3 code on top of PyPy3 and resolve issues we found along the way.

- Initial work on making RPython thread-safe without a GIL.

Isn't this a factor 80 slowdown because of a design error? Normally, one should store all creatures in a big numpy array and evaluate run_step on all creatures at once.
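For reference, the batched approach suggested in this comment could look roughly like the sketch below. It reuses the shapes from the Creature class in the post, but the names `run_step_all`, `run_step_one` and `N_CREATURES` are invented here, and the sketch makes no claim about how either variant performs on PyPy.

```python
import numpy as np

N_CREATURES = 5
STATE_VARS, INPUTS, OUTPUTS = 1, 2, 1

rng = np.random.default_rng(0)
# One matrix, constant and state per creature, stacked along axis 0.
matrices = rng.standard_normal(
    (N_CREATURES, STATE_VARS + OUTPUTS, STATE_VARS + INPUTS))
constants = rng.standard_normal((N_CREATURES, STATE_VARS + OUTPUTS))
states = rng.standard_normal((N_CREATURES, STATE_VARS + INPUTS))
inputs = rng.standard_normal((N_CREATURES, INPUTS))


def run_step_all(matrices, constants, states, inputs):
    """Run one step for every creature with a single batched product."""
    states[:, STATE_VARS:] = inputs
    out_values = np.einsum("nij,nj->ni", matrices, states) + constants
    states[:, :STATE_VARS] = out_values[:, :STATE_VARS]
    return out_values[:, STATE_VARS:]


def run_step_one(matrix, constant, state, inputs):
    """Same logic as Creature.run_step, for a single creature."""
    state[STATE_VARS:] = inputs
    out_values = np.dot(matrix, state) + constant
    state[:STATE_VARS] = out_values[:STATE_VARS]
    return out_values[STATE_VARS:]


batched_states = states.copy()
batched = run_step_all(matrices, constants, batched_states, inputs)

looped_states = states.copy()
looped = np.array([
    run_step_one(matrices[i], constants[i], looped_states[i], inputs[i])
    for i in range(N_CREATURES)
])

print(np.allclose(batched, looped))
```

Both variants compute the same outputs and state updates; the batched one pays the per-call overhead of NumPy once per step instead of once per creature.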

I don't understand - how do you figure out that line 1232 is not cheap?

@anatoly: line 1232 is a call to descr_dot: if you look at the implementation, you see that it does lots of things including mallocs, and those we know are not cheap at all

Have you tried the third argument of numpy.dot, out, to avoid memory allocation?
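A minimal sketch of what this suggestion would look like, using the shapes from the Creature class in the post. The buffer name `out_values` and the use of `np.add` for the constant are choices made for this example, not something taken from the original code:

```python
import numpy as np

STATE_VARS, INPUTS, OUTPUTS = 1, 2, 1

rng = np.random.default_rng(0)
matrix = rng.standard_normal((STATE_VARS + OUTPUTS, STATE_VARS + INPUTS))
constant = rng.standard_normal(STATE_VARS + OUTPUTS)
state = rng.standard_normal(STATE_VARS + INPUTS)

# Allocate the result buffer once, outside of the hot loop.
out_values = np.empty(STATE_VARS + OUTPUTS)

# np.dot writes into the preallocated buffer instead of a new array...
result = np.dot(matrix, state, out=out_values)
# ...and the constant is added in place as well.
np.add(out_values, constant, out=out_values)

expected = np.dot(matrix, state) + constant
print(np.allclose(out_values, expected))
```

The `out` array must already have the right shape and dtype and be C-contiguous, otherwise `np.dot` raises an error instead of silently allocating.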